Our house has roller shutters on all windows, and most of them have an electric motor to open and close them. The motors are Schellenberg shaft motors (not sure this is the right English term) for which you can configure end positions; by applying current to the right pair of pins, they move the shutters up or down until the current is removed or one of the end stops is reached. As the motors were installed over a long period of time, the attached control units varied in functionality: different ways to program open/close times, with and without a battery for the clock/settings, etc. But they all had two things in common: no central management, and they were pretty ugly. We decided to replace those control units with regular two-gang light switches that match the other switches in the house. And as we didn't want to lose the automatic open/close timer, we had to add some smarts to it.

Shelly 2.5 and ESPHome

Say hello to Shelly 2.5! The Shelly 2.5 is an ESP8266 with two relays attached to it in a super tiny package that you can hide in the wall behind a regular switch. Pretty nifty. It originally comes with a Mongoose OS based firmware and its own app, but ain't nobody needs that, especially nobody who wants to implement some crude logic. That said, the original firmware isn't bad. It has a no-cloud mode, a REST API and supports MQTT for integration into Home Assistant and others.

My ESP firmware of choice is ESPHome, which you can easily flash onto a Shelly (no matter whether 1, 2 or 2.5) using a USB-TTL adapter that provides 3.3V. Home Assistant has native ESPHome support with auto-discovery, which is much nicer than manually attaching things to MQTT topics.

To get ESPHome compiled for the Shelly 2.5, we'll need a basic configuration like this:

```yaml
esphome:
  name: shelly25
  platform: ESP8266
  board: modwifi
  arduino_version: 2.4.2
```

We're using `board: modwifi` as the Shelly 2.5 (and all other Shellys) has 2MB of flash, and the usually recommended `esp01_1m` would only use 1MB of that; otherwise the configurations are identical (see the PlatformIO entries for `modwifi` and `esp01_1m`). And `arduino_version: 2.4.2` is what the Internet suggests is the most stable SDK version, and who will argue with the Internet?!

Now an ESP8266 without network connection is boring, so we add a WiFi configuration:

```yaml
wifi:
  ssid: !secret wifi_ssid
  password: !secret wifi_password
  power_save_mode: none
```

The `!secret` commands load the named variables from `secrets.yaml`. While testing, I found the network connection of the Shelly very unreliable, especially when placed inside the wall and thus having rather bad reception (-75dBm according to ESPHome). However, explicitly setting `power_save_mode: none` seems to have fixed this, even though `NONE` is supposed to be the default on ESP8266.

At this point the Shelly has a working firmware and WiFi, but does not expose any of its features: no switches, no relays, no power meters.

To fix that, we first need to find out which GPIO pins all these are connected to. Thankfully we can basically copy and paste the definitions from the Tasmota (another open-source firmware for ESPs) template:

```yaml
pin_led1: GPIO0
pin_button1: GPIO2
pin_relay1: GPIO4
pin_switch2n: GPIO5
pin_sda: GPIO12
pin_switch1n: GPIO13
pin_scl: GPIO14
pin_relay2: GPIO15
pin_ade7953: GPIO16
pin_temp: A0
```

If we place that into the `substitutions` section of the ESPHome config, we can use the names everywhere and don't have to remember the pin numbers.
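ESPHome's `$name` / `${name}` substitution syntax happens to match Python's `string.Template`, so the idea of plain text replacement can be illustrated with the standard library. This is only an analogy, not ESPHome's actual implementation; the `location` value below is a made-up example.

```python
from string import Template

# Substitution values: pins from the Tasmota template above, plus a
# hypothetical "location" value used for entity names.
SUBSTITUTIONS = {
    "pin_relay1": "GPIO4",
    "pin_relay2": "GPIO15",
    "pin_switch1n": "GPIO13",
    "pin_switch2n": "GPIO5",
    "location": "Kitchen",  # hypothetical
}


def render(line: str, substitutions: dict) -> str:
    """Replace $name and ${name} placeholders in one config line."""
    # Template.substitute raises KeyError for a placeholder missing from the mapping.
    return Template(line).substitute(substitutions)


if __name__ == "__main__":
    print(render("pin: ${pin_relay1}", SUBSTITUTIONS))
    print(render('name: "${location} Rolladen"', SUBSTITUTIONS))
    print(render("pin: $pin_switch2n", SUBSTITUTIONS))
```

In this analogy the braced form is the safer choice, since it still works when the name is directly followed by characters that could be part of an identifier.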

The configurations for the ADE7953 power sensor and the NTC temperature sensor are taken verbatim from the ESPHome documentation, so there is no need to repeat them here.

The configuration for the switches and relays is also rather straightforward:

```yaml
binary_sensor:
  - platform: gpio
    pin: ${pin_switch1n}
    name: "Switch #1"
    internal: true
    id: switch1
  - platform: gpio
    pin: ${pin_switch2n}
    name: "Switch #2"
    internal: true
    id: switch2

switch:
  - platform: gpio
    pin: ${pin_relay1}
    name: "Relay #1"
    internal: true
    id: relay1
    interlock: &interlock_group [relay1, relay2]
  - platform: gpio
    pin: ${pin_relay2}
    name: "Relay #2"
    internal: true
    id: relay2
    interlock: *interlock_group
```

All of them are marked as `internal: true`, as we don't need them to be visible in Home Assistant.
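The `interlock` option is what keeps both relays from being on at once: turning one relay on switches the others in its group off. The Python model below is a minimal sketch of that rule for reasoning about it; the class and function names are made up, and it ignores any timing details of the real ESPHome implementation.

```python
class Relay:
    """A toy relay that may belong to an interlock group."""

    def __init__(self, name: str):
        self.name = name
        self.state = False
        self.group = []  # other relays in the same interlock group

    def turn_on(self) -> None:
        # Interlock rule: switch every other relay in the group off first,
        # so Up and Down never receive current at the same time.
        for other in self.group:
            if other is not self:
                other.state = False
        self.state = True

    def turn_off(self) -> None:
        self.state = False


def interlock(*relays: Relay) -> None:
    """Put all given relays into one shared interlock group."""
    group = list(relays)
    for relay in group:
        relay.group = group


if __name__ == "__main__":
    relay1, relay2 = Relay("relay1"), Relay("relay2")
    interlock(relay1, relay2)
    relay1.turn_on()
    relay2.turn_on()  # implicitly turns relay1 off
    print(relay1.state, relay2.state)
```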

ESPHome and Schellenberg roller shutters

Now that we have a working Shelly 2.5 with ESPHome, how do we control Schellenberg (and other) roller shutters with it?

Well, first of all we need to connect the Up and Down wires of the shutter motor to the two relays of the Shelly. If the relays were not marked as `internal: true`, they would show up in Home Assistant and we could flip them on and off, moving the shutters. But this would also mean that we would need to flip them off again after each use: while the motor knows when to stop and will do so, applying current to both wires at the same time produces rather interesting results. So instead of fiddling around with the relays directly, we define a time-based cover in our configuration:

```yaml
cover:
  - platform: time_based
    name: "${location} Rolladen"
    id: rolladen
    open_action:
      - switch.turn_on: relay2
    open_duration: ${open_duration}
    close_action:
      - switch.turn_on: relay1
    close_duration: ${close_duration}
    stop_action:
      - switch.turn_off: relay1
      - switch.turn_off: relay2
```
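Since the motor gives no feedback, a time-based cover can only infer the shutter position from how long it has been moving. The sketch below shows that inference in Python, assuming ESPHome's convention of 1.0 for fully open and 0.0 for fully closed; the function names and the 20 s / 18 s durations are hypothetical stand-ins for the `open_duration` and `close_duration` substitutions.

```python
def estimate_position(start: float, direction: str, elapsed_s: float,
                      open_duration_s: float, close_duration_s: float) -> float:
    """Estimate the shutter position (1.0 = open, 0.0 = closed) after moving
    for elapsed_s seconds, assuming a constant speed in each direction."""
    if direction == "open":
        position = start + elapsed_s / open_duration_s
    elif direction == "close":
        position = start - elapsed_s / close_duration_s
    else:
        raise ValueError(f"unknown direction: {direction!r}")
    # Clamp to the physical range; the motor's own end stops halt it there anyway.
    return min(1.0, max(0.0, position))


def end_reached(position: float, direction: str) -> bool:
    """True once the estimate hits the end of travel, roughly the point where
    a time-based cover would run its stop_action and release the relay."""
    return (direction == "open" and position >= 1.0) or (
        direction == "close" and position <= 0.0)


if __name__ == "__main__":
    OPEN_S, CLOSE_S = 20.0, 18.0  # hypothetical durations
    pos = estimate_position(0.0, "open", 10.0, OPEN_S, CLOSE_S)
    print(pos, end_reached(pos, "open"))
    pos = estimate_position(pos, "close", 9.0, OPEN_S, CLOSE_S)
    print(pos, end_reached(pos, "close"))
```

The constant-speed assumption is exactly why the configured durations matter: if they are too short, the relays are released before the shutter has fully travelled.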

We use a time-based cover because that's the easiest thing that will also turn the relays off for us after the shutters have been opened or closed, as the motor does not "report" any state back. We could use the Shelly's integrated power meter to turn the relays off when the load falls below a threshold, but I was too lazy and this works just fine as it is.
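The power-meter alternative mentioned above could look roughly like this: treat the motor as stopped once several consecutive power readings fall below a threshold. This is a hypothetical sketch in plain Python, not part of the configuration; the 5 W threshold and the three-sample window are invented values that would need calibrating against real readings.

```python
from collections.abc import Sequence


def motor_stopped(readings_w: Sequence[float], threshold_w: float = 5.0,
                  window: int = 3) -> bool:
    """Return True once the last `window` power readings are all below
    `threshold_w`, i.e. the motor has most likely hit its end stop."""
    if len(readings_w) < window:
        return False  # not enough data yet to decide
    return all(r < threshold_w for r in readings_w[-window:])


if __name__ == "__main__":
    # Made-up readings: motor running, then idle after reaching the end stop.
    samples = [0.4, 118.0, 121.5, 119.8, 2.1, 0.9, 0.8]
    for i in range(1, len(samples) + 1):
        if motor_stopped(samples[:i]):
            print(f"stop after sample {i}")
            break
```

A real version would only start checking after the relay has been switched on and current has actually been seen; otherwise the idle readings from before start-up would trigger an immediate stop.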

Next, let's add the physical switches. We could just add `on_press` automations to the binary GPIO sensors we configured for the two switch inputs of the Shelly. But if you have kids at home, you'll know that they like to press ALL THE THINGS, and what could be better than a small kill switch against small fingers?

```yaml
switch:
  # [ previous definitions go here ]

  # Exposed to Home Assistant; optimistic, so it just remembers on/off.
  - platform: template
    id: block_control
    name: "${location} Block Control"
    optimistic: true

  # "On" while physical switch #1 is on and control is not blocked.
  - platform: template
    name: "Move UP"
    internal: true
    lambda: |-
      return id(switch1).state && !id(block_control).state;
    on_turn_on:
      then:
        - cover.open: rolladen
    on_turn_off:
      then:
        - cover.stop: rolladen

  # "On" while physical switch #2 is on and control is not blocked.
  - platform: template
    name: "Move DOWN"
    internal: true
    lambda: |-
      return id(switch2).state && !id(block_control).state;
    on_turn_on:
      then:
        - cover.close: rolladen
    on_turn_off:
      then:
        - cover.stop: rolladen
```

This adds three more template switches.

The first one, "Block Control", is exposed to Home Assistant, has no `lambda` definition and is set to `optimistic: true`, which makes it basically a dumb switch that can be flipped at will; the only thing it does is store the binary on/off state.

The two others are almost identical. The name differs, obviously, and so does the `on_turn_on` automation (one triggers the cover to open, the other to close). The really interesting part is the `lambda`: it monitors one of the physical switches and, if that switch is on and "Block Control" is off, reports the template switch as turned on, thus triggering the automation.

With this, we can now block the physical switches via Home Assistant, while still being able to control the shutters from Home Assistant itself.
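The behaviour of the two "Move" template switches can be modelled in a few lines of Python: the lambda's result is re-evaluated repeatedly, and a change of that result fires `on_turn_on` or `on_turn_off`. The `GatedSwitch` class and the callbacks are hypothetical; the sketch mirrors the logic described above, assuming triggers fire only on state changes, rather than ESPHome's internals.

```python
from typing import Callable


class GatedSwitch:
    """Toy model of a 'Move UP'/'Move DOWN' template switch: its state is
    (physical switch on) AND NOT (block control on)."""

    def __init__(self, on_turn_on: Callable[[], None],
                 on_turn_off: Callable[[], None]):
        self.state = False
        self._on_turn_on = on_turn_on
        self._on_turn_off = on_turn_off

    def update(self, physical_on: bool, block_control: bool) -> None:
        # Same condition as the lambda in the YAML above.
        new_state = physical_on and not block_control
        if new_state == self.state:
            return  # nothing changed, nothing fires
        self.state = new_state
        if new_state:
            self._on_turn_on()
        else:
            self._on_turn_off()


if __name__ == "__main__":
    log = []
    move_up = GatedSwitch(lambda: log.append("cover.open"),
                          lambda: log.append("cover.stop"))
    move_up.update(physical_on=True, block_control=False)   # switch pressed
    move_up.update(physical_on=False, block_control=False)  # switch released
    move_up.update(physical_on=True, block_control=True)    # blocked: ignored
    print(log)
```

Note that enabling "Block Control" while a switch is held also counts as a change, so the model stops the cover in that case too.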

All this (and a bit more) can be found in my esphome-configs repository on GitHub, enjoy!

This is a fairly self-indulgent post, sorry!

Encouraged by Evgeni, Michael and others, given I'm spending a lot more time at my desk in my home office, here's a picture of it:

Near enough everything in the study is a work in progress.

The KALLAX behind my desk is a recent addition (under duress) because we have nowhere else to put it. Sadly I can't see it going away any time soon. On the upside, since Christmas I've had my record player and collection in and on top of it, so I can listen to records whilst working.

The armchair is a recent move from another room. It's a nice place to take some work calls, serving as a change of scene, and I hope to add a reading light sometime. The desk chair is some Ikea model I can't recall, which is sufficient and just fits into the desk cavity. (I'm fairly sure my Aeron, inaccessible elsewhere, would not fit.)

I've had this old mahogany, leather-topped desk since I was a teenager, and it's a blessing and a curse. Mostly a blessing: it's a lovely big desk. The main drawback is that it's very much not height-adjustable. At the back is a custom-made, full-width monitor stand/shelf, a recent gift produced to specification by my Dad, a retired carpenter.

On the top: my work ThinkPad T470s laptop, geared more towards portable than powerful (my normal preference), although for the foreseeable future it's going to remain in the exact same place; an Ikea desk lamp (I can't recall the model); a 27" 4K LG monitor, the cheapest such I could find when I bought it; an old analog LCD TV, fantastic for vintage consoles and the like.

Underneath: an Alesis Micron 2-octave analog-modelling synthesizer; various hubs and similar things; my Amiga 500.

Like Evgeni, my normal keyboard is a ThinkPad Compact USB Keyboard with TrackPoint.

I've been using different generations of these keyboards for a long time now, initially because I loved the TrackPoint pointer. I'm very curious about trying out mechanical keyboards, as I have very fond memories of my first IBM Model M buckled-spring keyboard, but I haven't dipped my toes into that money trap just yet. The ThinkPad keyboard is rubber-dome, but it's a good one.

Wedged between the right-hand bookcases is a stack of IT-related things: new printer; deprecated printer; old/spare/play laptops, docks and chargers; managed network switch; NAS.


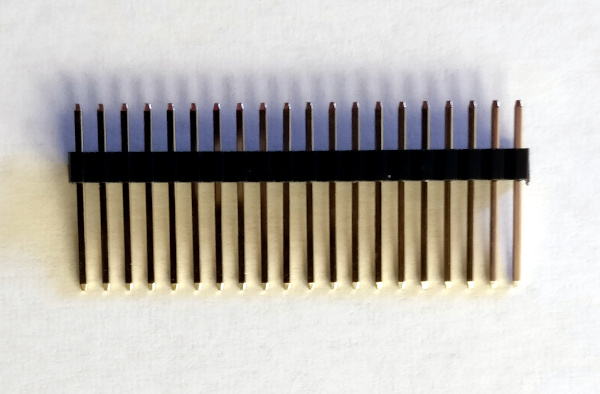

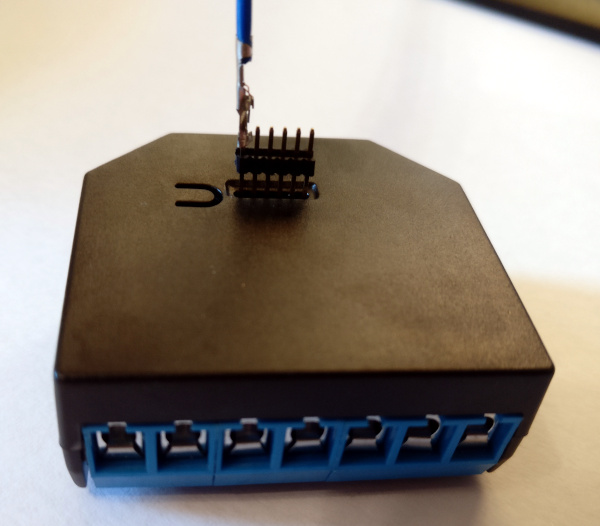

However, on closer inspection you'll notice that your normal jumper wires don't fit, as the Shelly has a connector with 1.27mm (0.05in) pitch and 1mm diameter holes.

Now, there are various tutorials on the Internet on how to build a compatible connector using


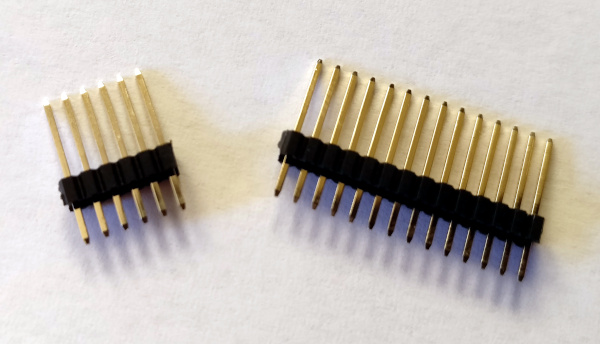


The first step is to cut the header into 6-pin chunks. Make sure not to cut too close to the 6th pin, as the whole thing is rather fragile and you might lose it.

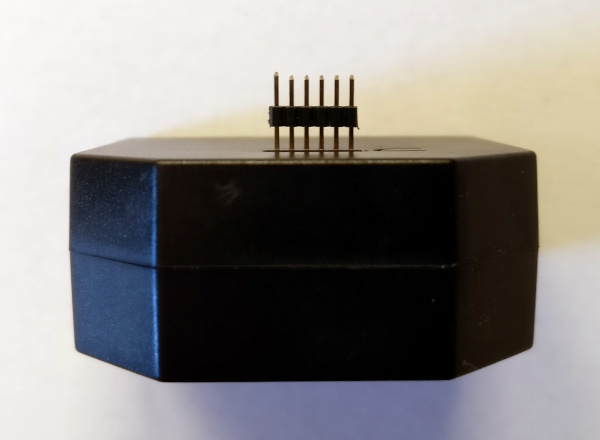

It now fits very well into the Shelly with the longer side of the pins.


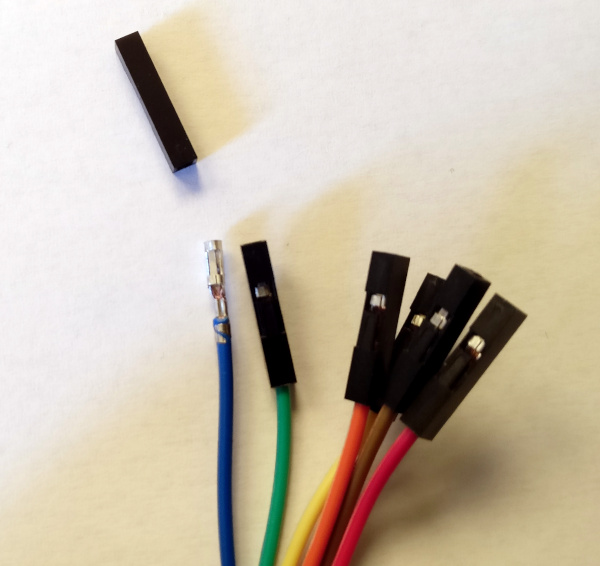

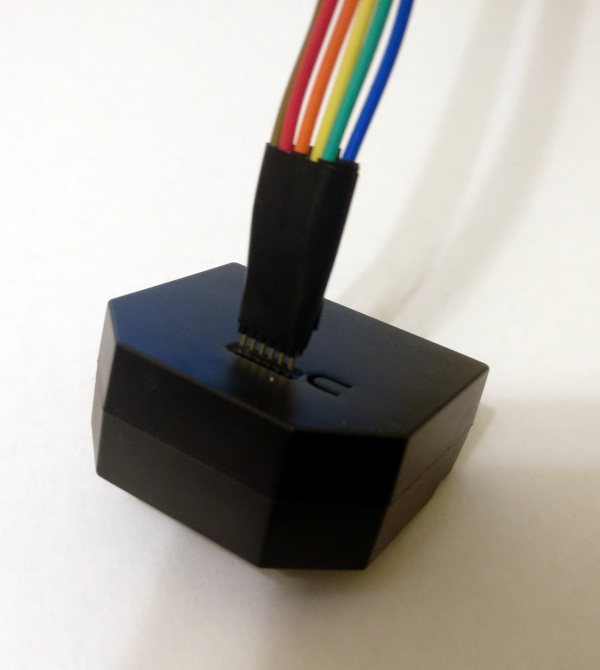

The second step is to strip the plastic housing from one end of the jumper wires. They are designed to fit 2.54mm pitch headers and won't work for our use case otherwise.


As the connectors are still too big, even after removing the plastic, the next step is to take some pliers and gently press the connectors until they fit the smaller pins of our header.


Now is the time to put everything together. To avoid short-circuiting the pins/connectors, apply some insulation tape while assembling, but not too much, as the space is really limited.


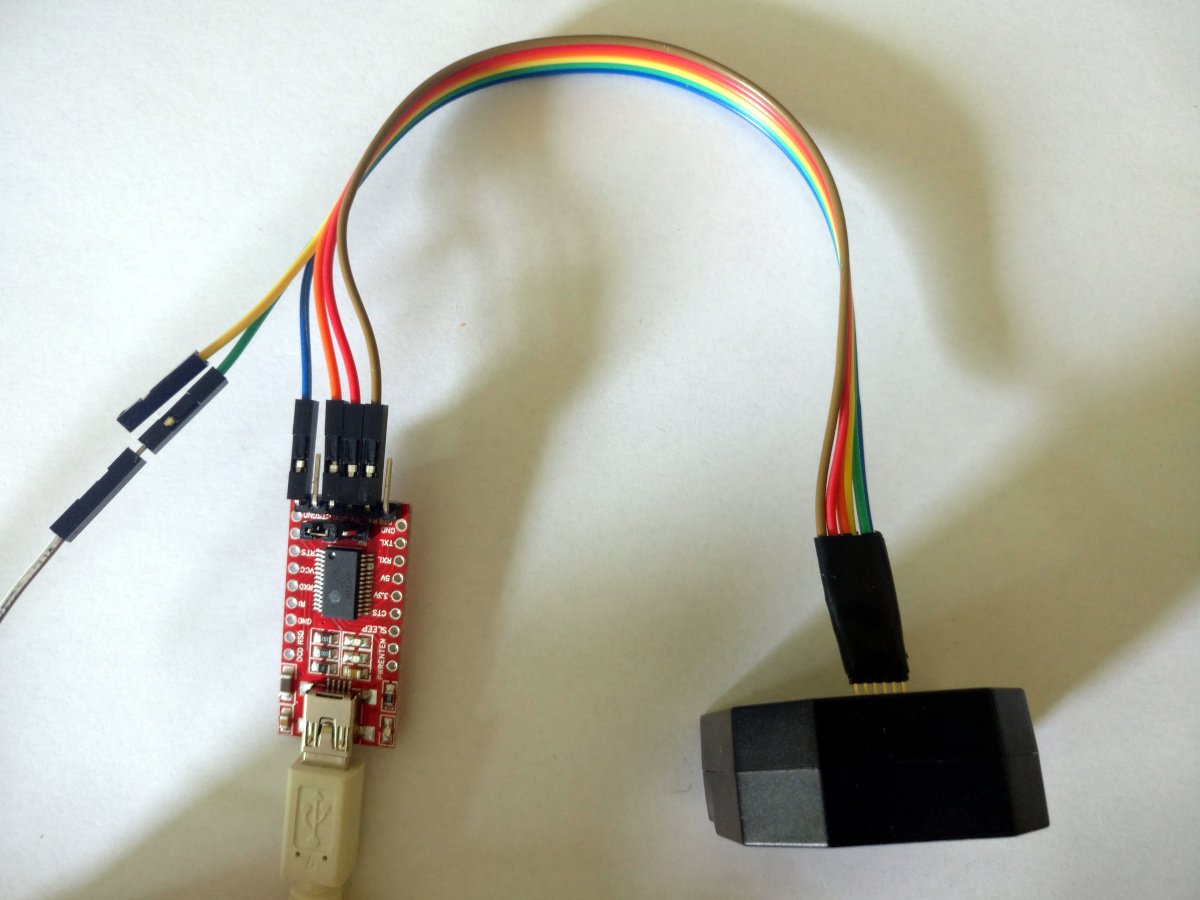

And we're done, a wonderful (lol) and working (yay) Shelly 2.5 cable that can be attached to any USB-TTL adapter, like the pictured FTDI clone you get almost everywhere.


Yes, in an ideal world we would have soldered the header to the cable, but I didn't feel like soldering in that limited space. And yes, heat-shrink tubing might be a good thing too, but again, space is limited, and with insulation tape you only need one layer between two pins, not two.


Here is my monthly update covering what I have been doing in the free software world (

As web search engines and IRC seem to be of no help, maybe someone here has a helpful idea. I have a service written in Python that comes with a .service file for systemd. I now want to build and install a working service file from the software's setup.py. I can override the build/build_py commands of setuptools, but that way I still lack knowledge of the bindir/prefix where my service script will be installed.
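Once the bindir is known, producing the unit file itself is plain text substitution. A minimal sketch, assuming a hypothetical unit template with a `${bindir}` placeholder and a made-up service name `myservice`; how to obtain the directory from setuptools is not covered here.

```python
from string import Template

# Hypothetical unit template as it might be shipped in the source tree.
UNIT_TEMPLATE = """\
[Unit]
Description=Example Python service

[Service]
ExecStart=${bindir}/myservice
ExecReload=/bin/kill -HUP $MAINPID

[Install]
WantedBy=multi-user.target
"""


def render_unit(template_text: str, bindir: str) -> str:
    """Fill in ${bindir}; safe_substitute leaves systemd's own $MAINPID alone."""
    return Template(template_text).safe_substitute(bindir=bindir)


if __name__ == "__main__":
    print(render_unit(UNIT_TEMPLATE, "/usr/local/bin"))
```

`safe_substitute` is used because unit files legitimately contain `$` variables such as `$MAINPID`; the trade-off is that a misspelled placeholder is silently left in place instead of raising an error.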

Solution

Turns out, if you override the


I am happy to announce the release of OASIS v0.4.7.


OASIS is a tool to help OCaml developers to integrate configure, build and install systems in their projects. It should help to create standard entry points in the source code build system, allowing external tools to analyse projects easily.

This tool is loosely inspired by Cabal, which is the same kind of tool for Haskell.

You can find the new release


A good amount of the Debian